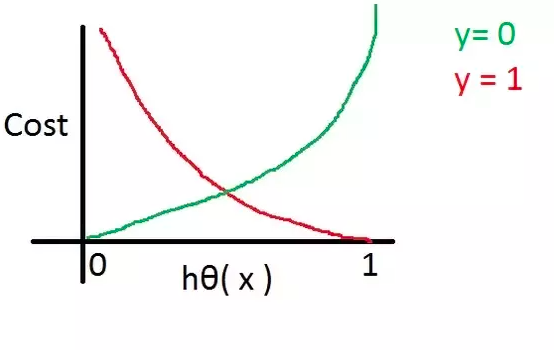

Cross entropy loss function (CrossEntropy Loss): principle explanation

Supervised learning is mainly divided into two categories:

* Classification: the target variable is discrete. For example, when judging whether a watermelon is good or bad, the target variable can only be 1 (good melon) or 0 (bad melon).
* Regression: the target variable is continuous, such as predicting the sugar content of a watermelon (0.00~1.00).

Classification in turn comes in two forms:

* Dichotomy (binary classification): for example, judging whether a watermelon is good or bad.
* Multiclassification: for example, judging a watermelon variety (Black Beauty, Te Xiaofeng, Annong 2, etc.).

The cross entropy loss function is the most commonly used loss function in classification. Cross entropy measures the difference between two discrete probability distributions; as a loss, it measures the difference between the learned distribution and the real distribution. As this suggests, two probability measures interact: cross entropy indicates the distance between what the model believes the output distribution should be and what the original distribution really is. It can be defined as

$$CE(p, q) = -\sum_{x} p(x) \log q(x)$$

where $p$ is the real distribution and $q$ is the predicted one. Cross entropy is the sum of the entropy of the real distribution and the KL divergence between the two distributions:

$$CE(p, q) = H(p) + D_{KL}(p \,\|\, q)$$

When the labels are one-hot and the predicted distribution matches them exactly, both terms vanish and the cross entropy is zero.

The general form of the cross entropy loss is

$$L = -\frac{1}{N} \sum_{i=1}^{N} \sum_{k=1}^{K} y_{i,k} \log p_{i,k}$$

where $y$ is the label ($y_{i,k}$ is 1 if the $i$-th sample has the $k$-th label value and 0 otherwise) and $p_{i,k}$ denotes the probability that the $i$-th sample is predicted to be the $k$-th label value.

In the case of a dichotomy, only two values can be predicted for each sample. Suppose the probability of predicting good melon (1) is $P$, so that the probability of predicting bad melon (0) is $1-P$:

$$P(y=1 \mid x) = P$$

$$P(y=0 \mid x) = 1-P$$

That is,

$$P(y \mid x) = P^{y} (1-P)^{1-y}$$

Taking the negative logarithm of this expression gives the binary cross entropy for one sample, $-[\,y \log P + (1-y) \log(1-P)\,]$.

Binary cross-entropy is the loss function used in binary classification problems, where the main aim is to answer a question with only two possible outcomes. If you are training a binary classifier, chances are you are using binary cross-entropy / log loss as your loss function. It should be used with a sigmoid activation in the last layer, and it severely penalizes opposite predictions. Applied to a multiclass output, it does not take into account that the output is one-hot coded and that the predictions should sum to 1, but because mis-predictions are penalized so severely, the model still learns to classify reasonably well. For multiclass problems, categorical cross entropy is used instead. Fitting the loss function also increases the distance between classes to a certain extent.

Cross entropy can be used as a loss function for logistic regression as well as for artificial neural networks. The only difference between the original cross-entropy loss and focal loss is two hyperparameters: alpha ($\alpha$) and gamma ($\gamma$). One practical question remains open here: I have not been able to find out how to calculate the loss between each column of a `ypredict` and a `ytarget` matrix as a GPU operation.

Cross entropy also appears in learning-to-rank. One of the challenges of learning-to-rank for information retrieval is that ranking metrics are not smooth and as such cannot be optimized directly with gradient descent optimization methods. This gap has given rise to a large body of research that reformulates the problem to fit into existing machine learning frameworks or defines a surrogate, ranking-appropriate loss function. One such loss is ListNet's, which measures the cross entropy between a distribution over documents obtained from scores and another obtained from ground-truth labels. This loss was designed to capture permutation probabilities and as such is considered to be only loosely related to ranking metrics. That statement is not entirely accurate, however: there is an analytical connection between softmax cross entropy and two popular ranking metrics in a learning-to-rank setup with binary relevance labels. In particular, ListNet's loss bounds Mean Reciprocal Rank as well as Normalized Discounted Cumulative Gain. This sheds light on the behavior of that loss function and explains its superior performance on binary labeled data over data with graded relevance.
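To make the binary and multiclass forms of the loss concrete, here is a minimal pure-Python sketch. The function names, the `EPS` clipping constant, and the single-`alpha` form of the focal variant are illustrative assumptions, not taken from any particular library. The focal variant only scales each cross entropy term by `alpha * (1 - p_t) ** gamma`, so with `alpha=1` and `gamma=0` it falls back to plain binary cross entropy.

```python
import math

EPS = 1e-12  # assumed clipping constant that keeps log() away from 0


def binary_cross_entropy(y_true, p_pred):
    """Mean binary cross entropy; y_true holds 0/1 labels, p_pred holds P(y=1|x)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, EPS), 1.0 - EPS)
        # Negative log of P^y * (1 - P)^(1 - y)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


def categorical_cross_entropy(y_true, p_pred):
    """Mean cross entropy; rows of y_true are one-hot, rows of p_pred sum to 1."""
    total = 0.0
    for y_row, p_row in zip(y_true, p_pred):
        total += -sum(y * math.log(max(p, EPS)) for y, p in zip(y_row, p_row))
    return total / len(y_true)


def binary_focal_loss(y_true, p_pred, alpha=1.0, gamma=0.0):
    """Simplified focal variant: one alpha for both classes (an assumption)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, EPS), 1.0 - EPS)
        p_t = p if y == 1 else 1.0 - p  # probability assigned to the true class
        total += -alpha * (1.0 - p_t) ** gamma * math.log(p_t)
    return total / len(y_true)


# Hypothetical melon data: 1 = good melon, 0 = bad melon
labels = [1, 0, 1]
probs = [0.9, 0.2, 0.6]
print(binary_cross_entropy(labels, probs))
print(binary_focal_loss(labels, probs, alpha=1.0, gamma=2.0))
```

Treating the same data as a two-class one-hot problem with `categorical_cross_entropy` should give the same value as `binary_cross_entropy`, which is one way to check that the binary loss is the two-class case of the general form.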
